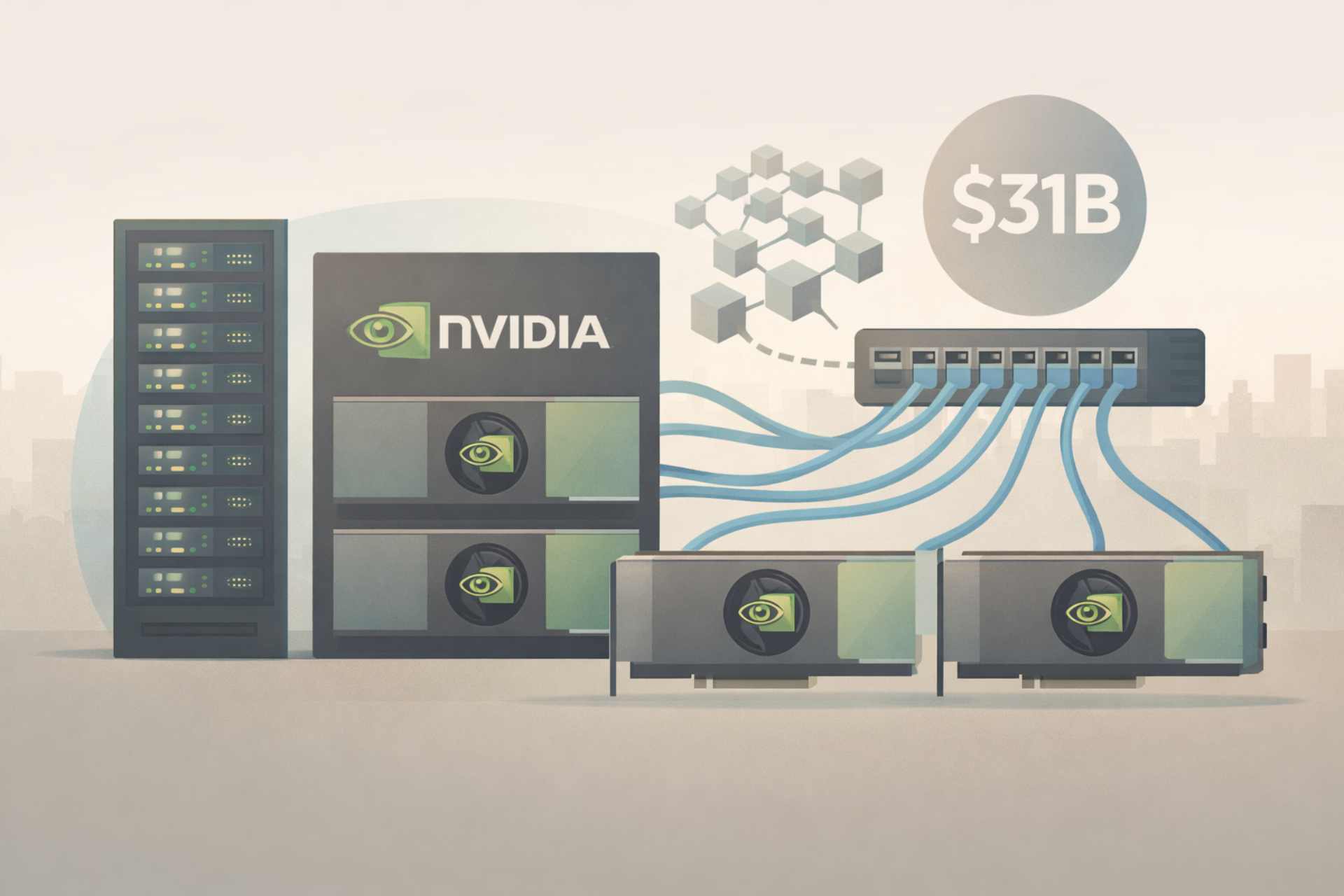

March 19, 2026

Nvidia’s data centre networking division has grown into a major business line, generating $11 billion in quarterly revenue and $31 billion for the full year. The unit has become the company’s second-largest revenue driver behind compute.

The growth stems from Nvidia’s push to build integrated systems for AI workloads, combining GPUs with high-performance networking to power large-scale data centres.

The networking business originated from Nvidia’s $7 billion acquisition of Mellanox in 2020, a move that expanded the company beyond chips into data transfer and interconnect technologies. Today, the division includes products such as NVLink, InfiniBand switches and Spectrum-X Ethernet platforms, which enable communication between GPUs and systems in AI-focused data centres.

According to Kevin Deierling, Nvidia’s senior vice-president of networking, the division’s success stems largely from two things: selling networking as part of a full-stack solution, and going to market through partners.

In his words: “I can’t think of other companies that have [the] full-stack capabilities that we have. We are really different. We build the full compute stack, fully integrated stack, and then we go to market through all of our partners.”

The scale of the business has drawn attention from analysts. Kevin Cook, a senior equity strategist at Zacks Investment Research, said Nvidia’s networking revenue in a single quarter now exceeds what traditional networking competitors generate in a full year.

Despite this growth, the segment has received less attention than Nvidia’s core chip business. The company’s GPUs remain the primary driver of its AI dominance, while its gaming division continues to be a well-known legacy segment.

Nvidia’s strategy centres on delivering a full-stack AI infrastructure offering rather than individual components. By pairing its GPUs with proprietary networking technologies, the company can optimise performance across entire systems and sell integrated solutions through partners.

Recent product announcements reinforce that direction. At its GTC conference, Nvidia introduced updates including the Rubin platform, new inference memory systems and more efficient networking hardware designed for AI workloads.

The combination of compute and networking is increasingly positioned as a single system. As AI models scale, the ability to move data efficiently between processors has become as critical as the processors themselves.