March 23, 2026

This is another in our series of “Expert Voices,” where we tap into our community of experienced IT professionals for advice, input and observations on technology. This piece is from our regular contributor, Yogi Schulz.

Graph databases are the fastest-growing category of data management software. They have evolved into a mainstream technology that organizations across industries have successfully implemented to support a wide variety of applications. Organizations are attracted to graph databases to address big, complex data challenges that traditional databases, such as relational and NoSQL, are not well-suited to handle.

Selecting a graph database is an important decision that can advance your organization’s business plan. Because graph database software is relatively new and evolving rapidly, buyers often struggle to reconcile the conflicting claims made by different vendors.

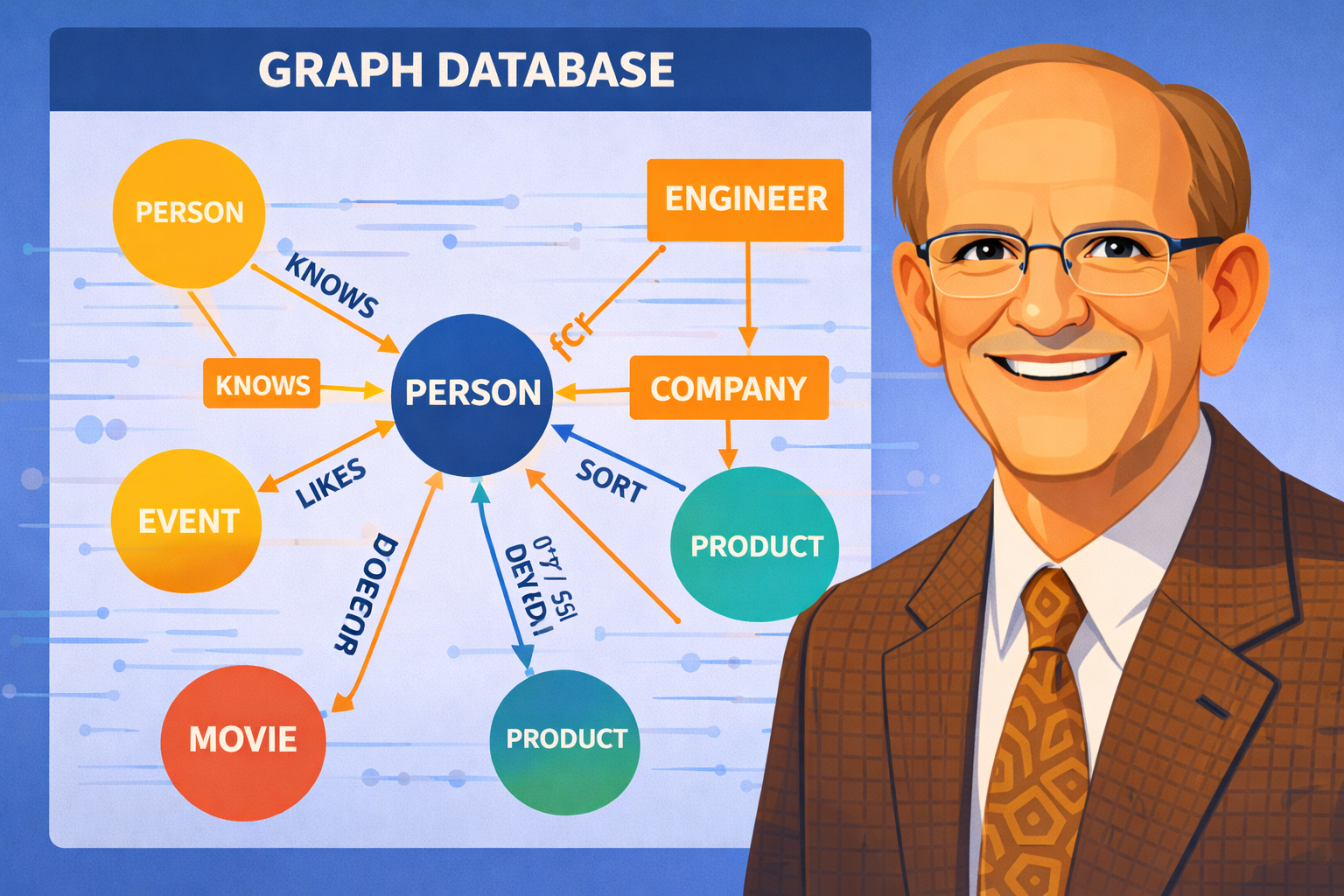

The value of graph databases lies in their ability to represent real-world objects and their relationships, making the schema easier to understand. To assist IT management in selecting graph database software, this article outlines the major selection criteria.

Functionality

The functionality of a graph database software package refers to the range of operations it supports and is a critical part of the overall evaluation.

Query execution speed

Query execution speed measures how quickly a graph database can query large data volumes and return results.

Query execution speed is often the most important selection criterion, as graph databases are used in applications that need to process large data volumes quickly. Lengthy query execution times mean processing occurs after the fact, and results will require follow-up rather than immediate resolution.

Update and insert execution speed

Update and insert execution speed measures how quickly a graph database can change existing data and add new data.

Update and insert speeds are important because database updates compete with active queries for server resources. Organizations try to minimize these periods of competition because they slow down response time. Read replicas, described below, can also substantially reduce this competition.

Scalability

Scalability is the ability to distribute graph databases, via partitioning, across multiple physical servers that comprise a cluster.

Scalability is important because large graph databases often exceed the storage capacity of a single physical server. Inadequate scalability can severely limit the size of a graph database.
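As a rough illustration of partitioning, the sketch below assigns vertices to servers by a simple modulo hash and counts how many edges end up spanning two servers. The function names and the toy ring graph are assumptions made for this example; real products use their own, usually more sophisticated, placement strategies.

```python
from collections import Counter


def partition_of(vertex_id: int, num_servers: int) -> int:
    """Assign a vertex to a server by hashing its ID (here: simple modulo)."""
    return vertex_id % num_servers


def partition_report(edges, num_servers):
    """Return (vertices per server, number of edges that span two servers)."""
    vertices = {v for edge in edges for v in edge}
    load = Counter(partition_of(v, num_servers) for v in vertices)
    cross = sum(
        1
        for a, b in edges
        if partition_of(a, num_servers) != partition_of(b, num_servers)
    )
    return dict(load), cross


# Hypothetical toy graph: a ring of 8 vertices (0-1, 1-2, ..., 7-0).
edges = [(i, (i + 1) % 8) for i in range(8)]
load, cross_edges = partition_report(edges, num_servers=2)
```

In this toy case the vertices are spread evenly, yet every edge crosses a server boundary, which hints at why the quality of a vendor's partitioning approach matters as much as its ability to partition at all.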

Ease-of-use

Graph databases from different vendors vary considerably in how easy their functionality is to use for each of the following typical categories of end-users:

- Business analysts and software developers use the query development and execution environment.

- Software developers spend much of their time using an integrated development environment (IDE) to develop application software that loads, updates, and integrates data in graph databases.

- Database administrators (DBAs) use the database management environment to monitor and manage the operation of the graph databases.

- Data modellers use a database management environment to implement and revise the schemas of graph databases.

Ease-of-use of the functionality associated with the graph database software is important because it determines:

- How productive and effective the staff will be when interacting with the database.

- The extent to which the graph database will achieve its availability target.

Technology

Evaluating the information technology incorporated into graph database software packages under consideration requires considerable expertise and is a critical part of the overall evaluation.

Artificial intelligence support

The boom in the use of artificial intelligence (AI) is creating an insatiable demand for graph databases capable of handling and processing sophisticated workloads.

Graph databases are better than relational databases for:

- Handling a mix of structured and unstructured data.

- Revealing connected patterns that deliver inferences.

- Supporting the drive to semantic understanding and reasoning.

Graph databases enhance AI applications by providing context-aware data relationships that relational databases cannot readily provide. This context can improve the accuracy, explainability, and performance of AI applications.

Because the requirement to support AI applications is so new, graph databases vary in their ability to do so.

Graph query language

Graph databases from different vendors use different query languages, unlike relational databases, which largely use standardized SQL. These graph query languages, many of which resemble SQL, vary in their capability, including the extent of their:

- Turing-completeness.

- Ability to express graph computations.

- Ability to process analytics natively.

- Support for ad hoc queries.

- Support for complex, parameterized procedures.

Insufficient capability adds to the effort required to develop software before an application can be used routinely in production.

For general graph database queries and pattern matching, declarative languages such as Cypher and GQL, the new ISO standard, are well-suited. For more specific or complex requirements, SPARQL (for RDF data), Gremlin (for traversal-style queries) or GraphQL (an API query language that some graph databases expose) may be more suitable.
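To give a feel for what declarative pattern matching looks like, the sketch below places a short Cypher query next to the same two-hop lookup written by hand in Python. The `Person` label, `KNOWS` relationship type, `$name` parameter, and sample data are all hypothetical, and the Cypher text is shown for comparison only; it is not sent to any database.

```python
# Illustrative Cypher text only; it is not executed anywhere in this sketch.
# The Person label, KNOWS relationship type, and $name parameter are hypothetical.
FRIENDS_OF_FRIENDS = """
MATCH (a:Person {name: $name})-[:KNOWS]->(:Person)-[:KNOWS]->(c:Person)
WHERE c.name <> $name
RETURN DISTINCT c.name
"""

# The same two-hop pattern written imperatively over an in-memory adjacency map.
knows = {
    "Ana": ["Ben"],
    "Ben": ["Cy", "Ana"],
    "Cy": [],
}


def friends_of_friends(graph, name):
    """Return names reachable in exactly two KNOWS steps, excluding the start."""
    found = set()
    for friend in graph.get(name, []):
        for friend_of_friend in graph.get(friend, []):
            if friend_of_friend != name:
                found.add(friend_of_friend)
    return sorted(found)


result = friends_of_friends(knows, "Ana")
```

The declarative form states the shape of the pattern and leaves the traversal strategy to the database engine, whereas the hand-written version fixes the traversal order in application code.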

In-database analytics

In-database analytics is the technology that processes analytics within the database engine.

The absence of this analytics technology necessitates that analytics processing occur externally. That processing adds infrastructure cost, complexity, and elapsed time to complete tasks.

Integrated development environment

Graph databases from different vendors vary in the scope and functionality of their IDEs. Desirable functionality includes:

- A visual interface, rather than a command-line interface, for exploration, update, and query development.

- Visual data modeling.

- Extract, transform, and load (ETL) support.

- Support for monitoring and management of graph databases.

The absence of sufficient IDE functionality reduces software developer productivity and leads to:

- Additional software development effort being required before an application can be used routinely in production.

- Longer elapsed times to implement enhancements and resolve software defects.

Data loading performance

Graph databases from different vendors vary in resource consumption and in the time required to perform common bulk data-loading tasks. The range of supported input data formats is a related selection criterion.

Without high-performance bulk data loading, load jobs run longer and compete with queries for server resources, slowing the graph database’s overall performance.

Database engine design features

Graph databases from different vendors vary in their database engine design choices. Major design features include:

- Distributed or multi-node graph storage offers a much higher ultimate limit on database size compared to single-node graph storage.

- Massively parallel processing offers a much higher limit on the number of queries and updates that can be performed concurrently compared to limited or no parallel processing.

- Compressed data storage offers a higher ultimate limit on database size compared to uncompressed data storage.

- Schema-first design optimizes query performance compared to schema-free design.

- Schema-free design reduces the effort and disruption associated with data structure changes compared to schema-first design.

- Read replicas allow the query load and the update load to run on separate server clusters, significantly raising the workload level at which the graph database’s update performance starts to degrade.
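The read-replica idea in the list above can be sketched as a small router that sends updates to a primary and spreads queries across replicas in round-robin order. The class name and server names are hypothetical, and the sketch ignores concerns such as replication lag and failover that a real product has to handle.

```python
from itertools import cycle


class ReplicaRouter:
    """Send writes to the primary and spread reads across replicas (round-robin)."""

    def __init__(self, primary, replicas):
        self.primary = primary
        # With no replicas configured, every operation falls back to the primary.
        self._replicas = cycle(replicas) if replicas else None

    def route(self, operation):
        """Return the name of the server that should handle the operation."""
        if operation == "read" and self._replicas is not None:
            return next(self._replicas)
        return self.primary


# Hypothetical server names, used only for illustration.
router = ReplicaRouter("primary", ["replica-1", "replica-2"])
targets = [router.route(op) for op in ["read", "write", "read", "read"]]
```

Because queries never reach the primary in this arrangement, the update workload no longer competes with them for the same server resources.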

Deep-link analytics

Deep-link analytics is the technology that supports query and insert processing for complex data structures. Processing complex data structures requires the ability to traverse 5 to 10 or more hops (relationships between connected objects) efficiently, regardless of database size.

Deep-link analytics is important because, without it, query and insert execution speeds deteriorate markedly with more hops, or the query cannot be processed at all.
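A minimal sketch of why hop count matters: the breadth-first search below counts how many vertices fall within a given number of hops of a starting vertex. The binary-tree test graph is an assumption chosen only because its growth is easy to verify; real graphs branch far less regularly.

```python
from collections import deque


def vertices_within_hops(adjacency, start, max_hops):
    """Breadth-first search returning {vertex: hop distance} up to max_hops."""
    distance = {start: 0}
    queue = deque([start])
    while queue:
        vertex = queue.popleft()
        if distance[vertex] == max_hops:
            continue  # do not expand beyond the hop limit
        for neighbour in adjacency.get(vertex, []):
            if neighbour not in distance:
                distance[neighbour] = distance[vertex] + 1
                queue.append(neighbour)
    return distance


def binary_tree(depth):
    """Hypothetical test graph: each vertex n links to 2n+1 and 2n+2."""
    parent_count = 2 ** depth - 1  # vertices 0 .. parent_count-1 have children
    return {n: [2 * n + 1, 2 * n + 2] for n in range(parent_count)}


graph = binary_tree(depth=10)
reached = [len(vertices_within_hops(graph, 0, hops)) for hops in (1, 5, 10)]
```

Even with only two relationships per vertex, the number of vertices touched grows from 3 at one hop to 63 at five hops and 2,047 at ten hops, so the work per query rises steeply as hops are added.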

In-database machine learning

In-database machine learning is the technology that runs machine learning algorithms within the database engine.

The absence of this technology requires machine learning processing to occur externally. That processing adds infrastructure cost, complexity, and elapsed time to complete tasks.

Transaction and cluster consistency

Graph databases from different vendors differ in the technology they use to guarantee reliable transaction processing. These guarantees are known as atomicity, consistency, isolation, and durability (ACID).

Maintaining ACID compliance is more complex in a server cluster than in a single server. The absence of ACID technology for a cluster introduces a severe limitation on the size and complexity of applications.
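The atomicity part of ACID can be illustrated with a toy in-memory graph that applies a batch of changes completely or not at all. The `TinyGraph` class and its methods are hypothetical and cover a single process only; a clustered product has to coordinate the same guarantee across servers, for example with a protocol such as two-phase commit.

```python
import copy


class TinyGraph:
    """In-memory graph with all-or-nothing batches of changes (atomicity only)."""

    def __init__(self):
        self.vertices = set()
        self.edges = set()

    def add_vertex(self, v):
        self.vertices.add(v)

    def add_edge(self, a, b):
        if a not in self.vertices or b not in self.vertices:
            raise ValueError(f"edge ({a}, {b}) references a missing vertex")
        self.edges.add((a, b))

    def transaction(self, operations):
        """Apply every operation or none: restore a snapshot on any failure."""
        snapshot = (copy.copy(self.vertices), copy.copy(self.edges))
        try:
            for method, args in operations:
                getattr(self, method)(*args)
        except Exception:
            self.vertices, self.edges = snapshot
            raise


g = TinyGraph()
g.transaction([("add_vertex", ("a",)), ("add_vertex", ("b",)), ("add_edge", ("a", "b"))])
try:
    # The second operation fails, so the vertex "c" must not survive either.
    g.transaction([("add_vertex", ("c",)), ("add_edge", ("c", "missing"))])
except ValueError:
    pass
```

After the failed batch, the graph still holds only the vertices and edge from the first, successful transaction.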

Graph algorithm library

Graph databases from different vendors vary in the scope and technology of the algorithm libraries they provide. Example capabilities include:

- Number or richness of graph algorithms.

- Extensibility of graph algorithms through code.

- Customizability of graph algorithms through parameters.

Software developers like to incorporate components from libraries into their applications. A rich graph algorithm library greatly reduces the effort required for software development and testing.

The absence of sufficient algorithms can lead to significant additional software development and testing effort before an application can be used routinely in production.
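As an example of the kind of algorithm such a library typically supplies, here is a minimal PageRank sketch in plain Python. It is not any vendor's implementation; the `damping` and `iterations` parameters stand in for the parameter-level customizability mentioned above, and the three-vertex graph is hypothetical.

```python
def pagerank(out_links, damping=0.85, iterations=50):
    """Power-iteration PageRank over {vertex: [targets]}; returns {vertex: score}."""
    vertices = set(out_links) | {t for targets in out_links.values() for t in targets}
    n = len(vertices)
    rank = {v: 1.0 / n for v in vertices}
    for _ in range(iterations):
        # Rank held by vertices with no outgoing links is shared by every vertex.
        dangling = sum(rank[v] for v in vertices if not out_links.get(v))
        new_rank = {v: (1.0 - damping) / n + damping * dangling / n for v in vertices}
        for v, targets in out_links.items():
            for t in targets:
                new_rank[t] += damping * rank[v] / len(targets)
        rank = new_rank
    return rank


# Hypothetical graph: "a" and "b" both link to "c", and "c" links back to "a".
links = {"a": ["c"], "b": ["c"], "c": ["a"]}
scores = pagerank(links, damping=0.85, iterations=50)
```

Here "c" ends up with the highest score because two vertices link to it, and "b" the lowest because nothing links to it. Writing, testing, and tuning even a small routine like this for every needed algorithm is the effort that a well-stocked library saves.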

Standard APIs

Graph databases from different vendors vary in their support for industry standards and widely used technologies such as REST APIs, JSON output, JDBC, Python, and Spark.

The absence of sufficient support for industry standards can lead to significant additional software development and testing effort before an application can be used routinely in production.

OLAP and OLTP workloads

Graph databases from different vendors vary in the technology available to support both online analytical processing (OLAP) and online transaction processing (OLTP) workloads.

The design of graph databases is strongly oriented toward efficiently handling large OLAP workloads.

However, as graph databases gain prominence in organizations, they will inevitably be expected to handle OLTP workloads.

The lack of robust OLTP support means the OLTP workload will consume more server resources, slowing the graph database’s overall performance. Alternatively, organizations can address this OLTP issue by implementing a policy that prohibits the use of graph databases for OLTP workloads.

The features that graph databases offer for complex applications appeal to organizations seeking to advance their business plans. These advantages are driving rapid increases in sales and adoption.