March 26, 2026

OpenAI has indefinitely paused plans to launch a sexualised “adult mode” for ChatGPT following internal and external concerns about potential societal harm. The decision is part of a shift to prioritise core products as competition intensifies from rivals such as Anthropic and Google.

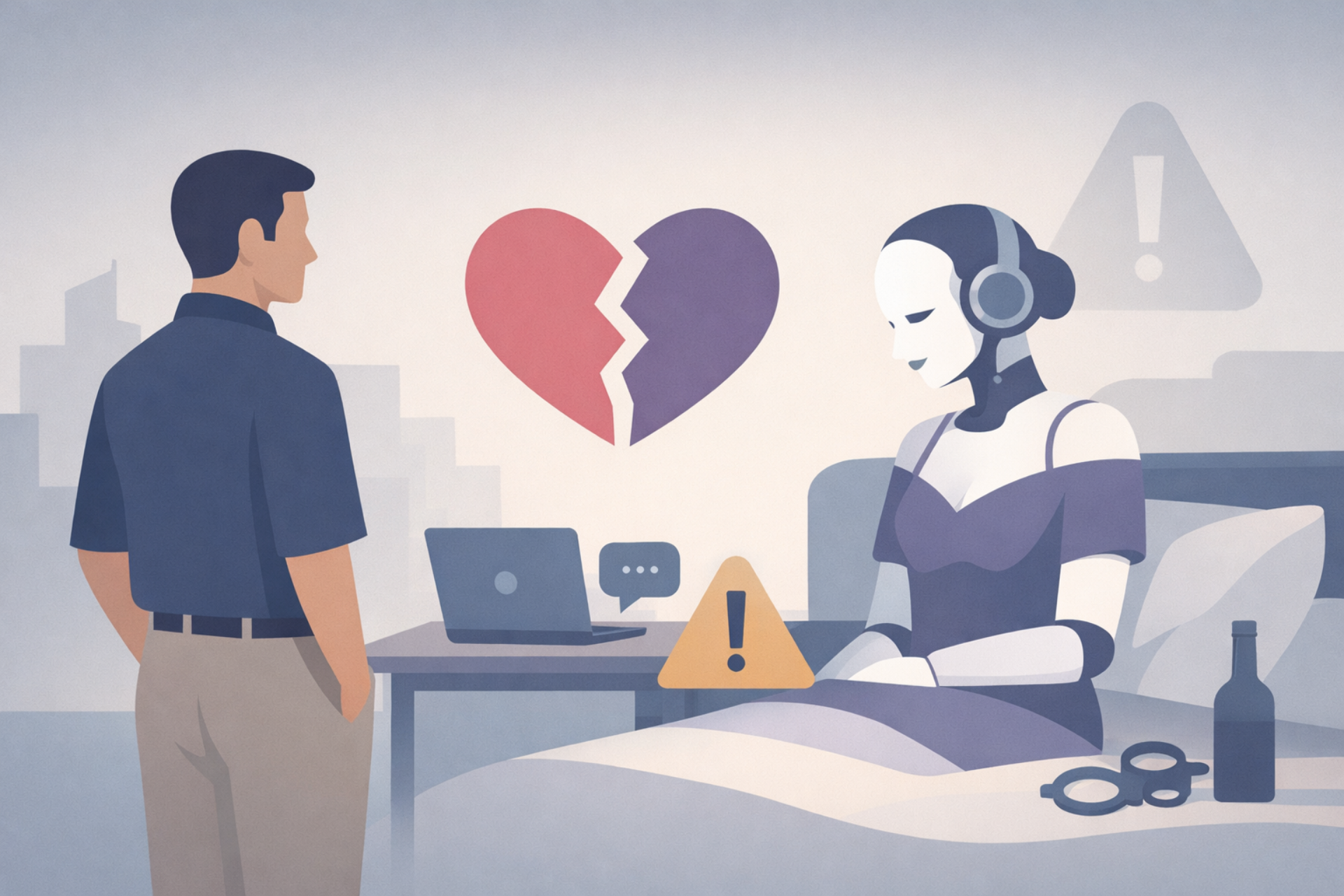

The move follows pushback from employees, advisers and investors, with concerns ranging from content moderation to the risks of emotional attachment to AI systems. One adviser warned internally that the company could risk creating a “sexy suicide coach,” highlighting the sensitivity of deploying such features at scale.

The proposed feature, first discussed publicly by CEO Sam Altman in October, had already faced multiple delays. According to reports, OpenAI now plans to conduct further research into the long-term effects of sexually explicit AI interactions before revisiting any product decision, noting that there is currently no empirical evidence on those risks.

The pause comes amid a strategic reset. OpenAI has recently deprioritised or discontinued several initiatives, including its AI video generator Sora and an “Instant Checkout” feature designed to turn ChatGPT into an e-commerce interface. Both moves were linked to internal discussions about focusing resources on higher-priority areas.

That shift aligns with a reported “code red” issued by Altman late last year, urging teams to refocus on ChatGPT and core capabilities. Internally, leadership has pushed to prioritise three areas: improving coding models such as Codex, strengthening enterprise adoption and positioning ChatGPT as a productivity tool.

Competitive pressure is a key factor. Anthropic has gained traction with business-focused AI tools, while Google continues to compete aggressively in consumer AI. OpenAI has also expanded into enterprise and government work, including a $200 million contract with the U.S. Department of Defense, as it seeks more stable revenue streams.

The adult mode controversy also underscores governance challenges around AI deployment. The concerns raised internally and externally centre on safety, content boundaries and the unintended consequences of increasingly human-like interactions with AI systems.