Researchers from MIT, KAUST, ISTA, and Yandex have unveiled a breakthrough in compressing large language models (LLMs), potentially enabling them to run on everyday consumer hardware without major performance loss.

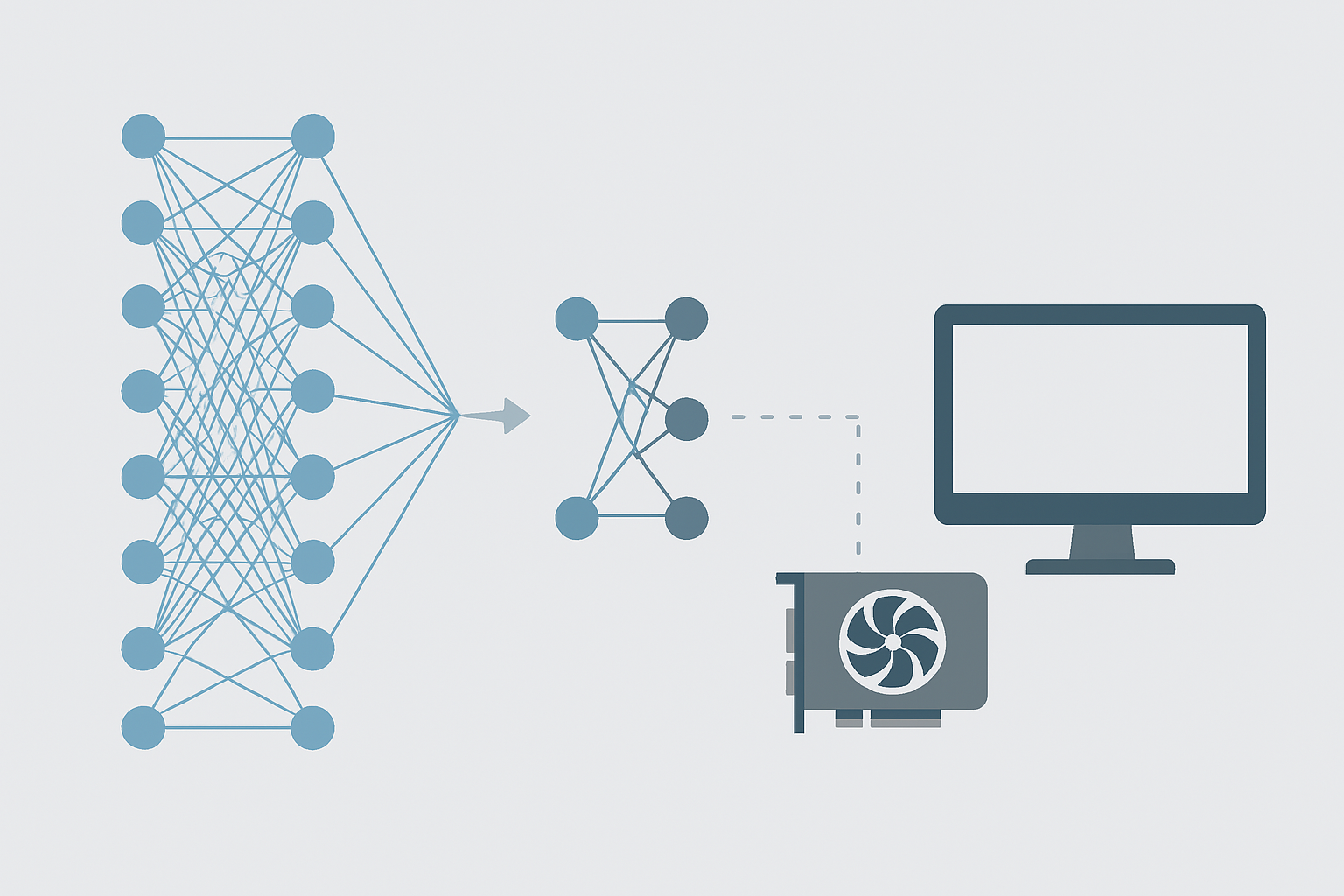

The new method, called ZeroQuant-V2, is a quantization technique: it reduces the memory footprint of LLMs by storing the network's weights and activations at lower numerical precision. This approach reportedly cuts memory usage by up to 50% while retaining over 95% of the model’s original accuracy on standard benchmarks.
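To make the idea of "lowering the precision of weights" concrete, here is a minimal sketch of generic symmetric round-to-nearest quantization to 8-bit integers. It is not the method described in the paper. The function names `quantize_int8` and `dequantize` and the sample weights are hypothetical, chosen only for illustration.

```python
def quantize_int8(weights):
    """Symmetric per-tensor round-to-nearest quantization to signed 8-bit values.

    Generic illustration only; this is NOT the paper's algorithm.
    """
    max_abs = max(abs(w) for w in weights)
    # Map the largest magnitude to 127; fall back to 1.0 for an all-zero tensor.
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    # Round each weight to the nearest integer step and clamp to [-127, 127].
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q, scale):
    """Recover approximate floating-point weights from the integer codes."""
    return [v * scale for v in q]


# Hypothetical weights, purely for demonstration.
weights = [0.12, -0.98, 0.45, 0.003, -0.27]
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)

# Worst-case rounding error across the tensor.
max_error = max(abs(a - b) for a, b in zip(weights, restored))
print(q, scale, max_error)
```

Each weight is stored as a single byte plus one shared scale factor. The rounding error per weight is bounded by half a quantization step (`scale / 2`), which is why accuracy can hold up well when the weight distribution has no extreme outliers.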

Unlike many compression techniques that require retraining or specialized tuning, ZeroQuant-V2 is training-free and plug-and-play, designed to work out-of-the-box across a range of architectures including LLaMA, OPT, and GPT-style models.

This development could significantly lower the barrier to running powerful language models on edge devices or consumer-grade GPUs, including setups without high-end cloud infrastructure or enterprise-grade compute. That opens the door for smaller companies, researchers, and even individual developers to work with advanced AI locally.
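A rough back-of-the-envelope calculation shows why precision matters for consumer hardware. The sketch below estimates weight storage only, for a hypothetical 7-billion-parameter model. The parameter count and the helper name `weight_memory_gb` are assumptions for illustration. It ignores activations, the attention cache, and any per-group scale metadata a real quantization scheme would add.

```python
def weight_memory_gb(num_params, bits_per_weight):
    """Approximate weight storage in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * bits_per_weight / 8 / 1e9


# Hypothetical model size, not taken from the article.
params = 7_000_000_000

fp16_gb = weight_memory_gb(params, 16)  # 16-bit floating-point baseline
int8_gb = weight_memory_gb(params, 8)   # 8-bit quantized weights

# Fraction of weight memory saved by halving the bit width.
saving = 1 - int8_gb / fp16_gb
print(f"fp16: {fp16_gb:.1f} GB, int8: {int8_gb:.1f} GB, saving: {saving:.0%}")
```

Under these assumptions, halving the bit width halves the weight footprint, which is in line with the "up to 50%" figure reported above and can be the difference between fitting on a single consumer GPU or not.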

As LLMs grow more powerful, so do their hardware demands. ZeroQuant-V2 represents a step toward democratizing access to AI, bringing capabilities once limited to data centers into reach for low-resource environments. The method could also reduce costs and latency for AI applications at the edge, particularly in privacy-sensitive or offline scenarios.

Here is a link to the paper: https://proceedings.neurips.cc/paper_files/paper/2022/file/adf7fa39d65e2983d724ff7da57f00ac-Paper-Conference.pdf